Support Vector Machine in Machine Learning: Complete Guide

Welcome to this in-depth guide to the support vector machine (SVM) in machine learning. If you are exploring the landscape of artificial intelligence, the SVM is one of the classical algorithms worth mastering. This guide covers its definition, how it works internally, the core mathematics, and how you can apply it in real-world scenarios.

1. Support Vector Machine Definition & What It Is

To begin, let's establish a clear definition. A Support Vector Machine (commonly abbreviated as SVM) is a supervised learning model used for both classification and regression tasks.

The core idea of the SVM algorithm is to treat each sample in a dataset as a point in an n-dimensional space (where n is the number of features you have) and to find the optimal "hyperplane": the decision boundary that separates the classes with the widest possible gap.

2. The Support Vector Machine Architecture & Working

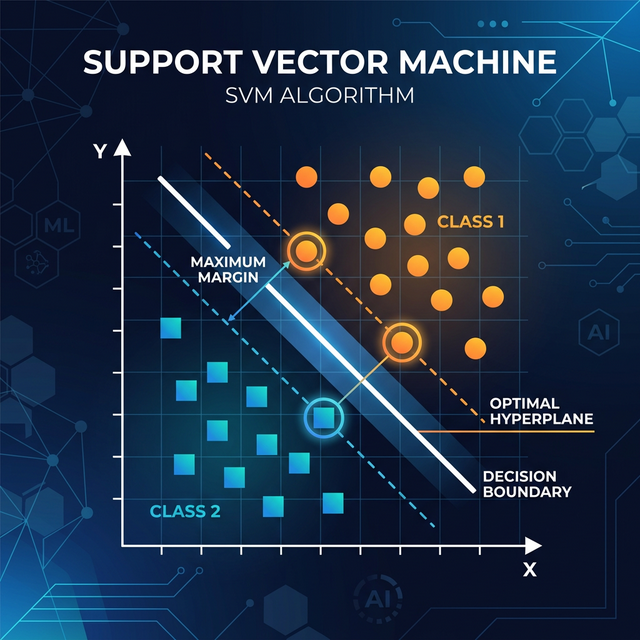

Understanding how a support vector machine works requires looking at its internal components. The fundamental goal is to maximize the margin between the data classes. The model finds the boundary by focusing only on the data points that lie closest to the opposing class. These critical points are called "Support Vectors." They function like structural pillars: if you move one of them, the boundary itself moves, whereas points far from the boundary have no influence on it.

When you look at a typical SVM plot, you'll see a solid line (the hyperplane) flanked by two dashed lines that mark the edges of the margin. Training adjusts the hyperplane until the gap between those dashed lines is as wide as possible while the training points stay on their correct sides. In practice, a soft-margin SVM tolerates some violations, and the regularization parameter C controls how heavily they are penalized.
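To make the role of the support vectors concrete, here is a minimal sketch, assuming scikit-learn's `SVC` with a linear kernel and a made-up six-point dataset (the data and variable names are illustrative assumptions, not part of the original guide). It reads the support vectors off the fitted model and derives the margin width from the weight vector.

```python
import numpy as np
from sklearn.svm import SVC

# Hypothetical toy data: two well-separated clusters in 2D
X = np.array([[1.0, 1.0], [2.0, 1.0], [1.0, 2.0],
              [4.0, 4.0], [5.0, 4.0], [4.0, 5.0]])
y = np.array([0, 0, 0, 1, 1, 1])

# A very large C approximates a hard margin (violations are heavily penalized)
clf = SVC(kernel="linear", C=1e6)
clf.fit(X, y)

# Only the points nearest the opposing class end up as support vectors
support_vectors = clf.support_vectors_

# For a linear SVM the margin width is 2 / ||w||
w = clf.coef_[0]
margin_width = 2.0 / np.linalg.norm(w)

print("Support vectors:\n", support_vectors)
print("Margin width:", margin_width)
```

On this data the margin width should come out close to 5/√2 ≈ 3.54, the distance between the lines x + y = 3 and x + y = 8 that pass through the nearest points of each cluster.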

3. The Support Vector Machine Mathematics & Formula

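The mathematics of the support vector machine relies on linear algebra, and in particular on dot products between vectors. A linear hyperplane is described by:

$$w \cdot x + b = 0$$

A point $x$ falls on one side of the boundary when $w \cdot x + b$ is positive and on the other side when it is negative. With class labels $y_i \in \{-1, +1\}$, training makes $\lVert w \rVert$ as small as possible while requiring $y_i (w \cdot x_i + b) \ge 1$ for every training point, which makes the margin $2 / \lVert w \rVert$ as wide as possible.

Where: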

- w: The weight vector, sitting strictly perpendicular (normal) to the hyperplane.

- x: The input feature vector.

- b: The bias offset, which shifts the hyperplane away from the origin (the hyperplane's distance from the origin is $|b| / \lVert w \rVert$).
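The roles of w, x, and b can be checked numerically. The sketch below is an illustration under stated assumptions: it uses scikit-learn's `SVC` with a linear kernel on hypothetical toy data, evaluates w · x + b by hand, and compares the result with the library's own decision function.

```python
import numpy as np
from sklearn.svm import SVC

# Hypothetical toy data, linearly separable, with labels -1 and +1
X = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0],
              [3.0, 3.0], [4.0, 3.0], [3.0, 4.0]])
y = np.array([-1, -1, -1, 1, 1, 1])

clf = SVC(kernel="linear", C=1.0).fit(X, y)

w = clf.coef_[0]        # weight vector, normal to the hyperplane
b = clf.intercept_[0]   # bias offset

# Evaluate w . x + b by hand and compare with the library's decision function
manual_scores = X @ w + b
library_scores = clf.decision_function(X)

# The sign of w . x + b tells us which side of the hyperplane a point is on
manual_labels = np.where(manual_scores >= 0, 1, -1)

# Perpendicular distance from the origin to the hyperplane
origin_distance = abs(b) / np.linalg.norm(w)

print("Scores match:", np.allclose(manual_scores, library_scores))
print("Distance of hyperplane from origin:", origin_distance)
```

Note that `coef_` is only available for the linear kernel; with other kernels the boundary is not a hyperplane in the original feature space.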

4. Support Vector Machine Kernel Trick (RBF)

What happens when the classes cannot be separated by a straight line, for example when one class forms a ring around the other? This is where the kernel trick steps in. A kernel function computes similarities between points as if they had been mapped into a higher-dimensional space, where a flat hyperplane can separate them, without ever constructing that mapping explicitly.

The most widely used of these is the RBF (Radial Basis Function) kernel. Instead of a dot product between the raw feature vectors, the RBF kernel scores similarity by the distance between points, $K(x, z) = \exp(-\gamma \lVert x - z \rVert^2)$, which produces curved, wrapping boundary lines in the original feature space. You can readily experiment with the RBF kernel in any online SVM playground tool.
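As a rough illustration of the ring-shaped case described above, the following sketch assumes scikit-learn's `make_circles` generator as a stand-in dataset and an arbitrary `gamma=1.0`. It first checks a hand-written RBF kernel against the library's, then compares a linear kernel with the RBF kernel on the same data.

```python
import numpy as np
from sklearn.datasets import make_circles
from sklearn.metrics.pairwise import rbf_kernel
from sklearn.svm import SVC


def rbf(x, z, gamma):
    """RBF kernel value: exp(-gamma * squared Euclidean distance)."""
    return np.exp(-gamma * np.sum((x - z) ** 2))


# Synthetic data: a small inner circle (one class) inside a larger ring (the other)
X, y = make_circles(n_samples=200, factor=0.3, noise=0.05, random_state=0)

# The hand-written kernel should agree with scikit-learn's implementation
manual_k = rbf(X[0], X[1], gamma=1.0)
library_k = rbf_kernel(X[:1], X[1:2], gamma=1.0)[0, 0]

# Accuracy on the training data itself, to show separability rather than generalization
linear_acc = SVC(kernel="linear").fit(X, y).score(X, y)
rbf_acc = SVC(kernel="rbf", gamma=1.0).fit(X, y).score(X, y)

print("Kernel values match:", np.isclose(manual_k, library_k))
print("Linear kernel training accuracy:", linear_acc)
print("RBF kernel training accuracy:", rbf_acc)
```

The linear kernel has no straight line available that separates a ring from its center, so its accuracy stays near chance, while the RBF kernel can wrap a closed boundary around the inner class.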

5. Support Vector Machine for Regression (SVR)

While extremely popular for classification (identifying categories like cat vs. dog), the support vector machine can also predict continuous numerical values. This variant is called Support Vector Regression (SVR).

Unlike ordinary least-squares regression, which penalizes every error, support vector regression builds a "tube" (the epsilon-margin) around the fitted function. Errors that fall inside the tube cost nothing, and errors outside it are penalized linearly rather than quadratically, which makes the model less sensitive to outliers than a squared-error fit.
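The epsilon tube can be observed directly. This is a minimal sketch, assuming scikit-learn's `SVR` with a linear kernel and synthetic noisy-line data; the values chosen for `C`, `epsilon`, and the noise level are arbitrary illustrations. It checks that points lying clearly inside the tube do not become support vectors.

```python
import numpy as np
from sklearn.svm import SVR

# Synthetic data: a noisy straight line (made up for illustration)
rng = np.random.default_rng(0)
X = np.linspace(0, 10, 50).reshape(-1, 1)
y = 2.0 * X.ravel() + 1.0 + rng.normal(scale=0.2, size=50)

# epsilon sets the half-width of the tube around the fitted function
svr = SVR(kernel="linear", C=10.0, epsilon=0.5)
svr.fit(X, y)

# Absolute error of each training point relative to the fitted function
residuals = np.abs(y - svr.predict(X))

# Points well inside the tube (a small tolerance allows for solver precision)
clearly_inside = residuals < svr.epsilon - 0.05

# Mark which training points the model kept as support vectors
is_support = np.zeros(len(X), dtype=bool)
is_support[svr.support_] = True

print("Points clearly inside the tube:", clearly_inside.sum())
print("Support vectors:", is_support.sum())
```

Only points on or outside the edge of the tube contribute to the fitted function, which is why the number of support vectors is typically much smaller than the number of training points when the tube is wide relative to the noise.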

6. Support Vector Machine Applications

Because it performs well in high-dimensional feature spaces, the SVM is a well-established tool in data mining pipelines. Key support vector machine applications include:

- Support Vector Machine Trading: Used in algorithmic stock market trading to predict the direction of price movements from hundreds of overlapping historical technical indicators.

- Bioinformatics: Classifying genetic sequences and protein structures, and categorizing cancer tissue samples, from high-dimensional datasets.

- Text Categorization: Parsing natural language, analyzing product review sentiment, and sorting articles into news categories.
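To give a flavor of the text categorization use case, here is a small sketch that assumes a TF-IDF representation from scikit-learn's `TfidfVectorizer` feeding a linear-kernel `SVC`. The six reviews are hand-written, hypothetical examples, far too few for a real system.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import make_pipeline
from sklearn.svm import SVC

# Hypothetical, hand-written reviews purely for illustration
texts = [
    "great product, works perfectly",
    "excellent quality and fast delivery",
    "love it, highly recommend",
    "terrible quality, broke after a day",
    "awful experience, do not buy",
    "waste of money, very disappointed",
]
labels = ["positive", "positive", "positive",
          "negative", "negative", "negative"]

# TF-IDF turns each review into a high-dimensional sparse vector;
# a linear kernel is a common choice for such sparse text features
classifier = make_pipeline(TfidfVectorizer(), SVC(kernel="linear"))
classifier.fit(texts, labels)

new_reviews = ["excellent product, highly recommend",
               "terrible, very disappointed"]
predicted = classifier.predict(new_reviews)

for review, label in zip(new_reviews, predicted):
    print(f"{label}: {review}")
```

Each distinct word becomes its own feature, so even short texts live in a space with many dimensions, which is the setting where SVMs tend to do well.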

7. Python Implementation Example

Here is a concise implementation of a support vector machine classifier using Python's scikit-learn with the RBF kernel.

# 1. Import necessary components
import numpy as np
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC  # Support Vector Classifier

# 2. Dummy training dataset: predicting user action (0 = No buy, 1 = Buy) based on age & income
X = np.array([[22, 20000], [25, 30000], [45, 80000], [50, 100000], [21, 15000], [40, 75000]])
y = np.array([0, 0, 1, 1, 0, 1])

# 3. Split the data; stratify so both classes appear in the training and test sets
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=42, stratify=y
)

# 4. Initialize SVC with the RBF kernel; scale the features first, because the
#    RBF kernel is distance-based and income would otherwise dominate age
model = make_pipeline(StandardScaler(), SVC(kernel='rbf', C=1.0))

# 5. Fit the support vector machine model
model.fit(X_train, y_train)

# 6. Predict results
predictions = model.predict(X_test)

# 7. Evaluate (with only two test samples, the score is purely illustrative)
print(f"SVM Accuracy: {accuracy_score(y_test, predictions):.0%}")

Conclusion

The support vector machine remains a pillar of classical machine learning. Whenever you think of an SVM, picture that crisp hyperplane splitting the data! By understanding both the classification (SVC) and regression (SVR) modes alongside the kernel trick, you arm yourself with an algorithm that generalizes well when its hyperparameters, such as C and the kernel settings, are tuned with care. Like any model, it can still overfit if they are not.
